Positron AI Raises $51.6 Million to Revolutionize Inference-Optimized Hardware and Scale Frontier AI Models

Positron AI, a developer of American-made semiconductors and inference hardware, has closed an oversubscribed $51.6 million Series A funding round, bringing its total capital raised this year to over $75 million. The round was led by Valor Equity Partners, Atreides Management, and DFJ Growth, with additional investment from Flume Ventures (backed by tech icon Scott McNealy), Resilience Reserve, 1517 Fund, and Unless. The raise reflects growing demand for specialized, cost-efficient hardware as the cost and power requirements of AI infrastructure escalate.

The new funding will support the continued deployment of Positron’s first-generation product, Atlas, and accelerate the development of its next-generation systems, including the Titan platform slated for release in 2026. With global tech firms projected to spend over $320 billion on AI infrastructure in 2025, enterprises are grappling with skyrocketing costs, power constraints, and chronic shortages of NVIDIA GPUs. Positron’s purpose-built hardware offers a compelling alternative, delivering significant cost and efficiency advantages through specialization.

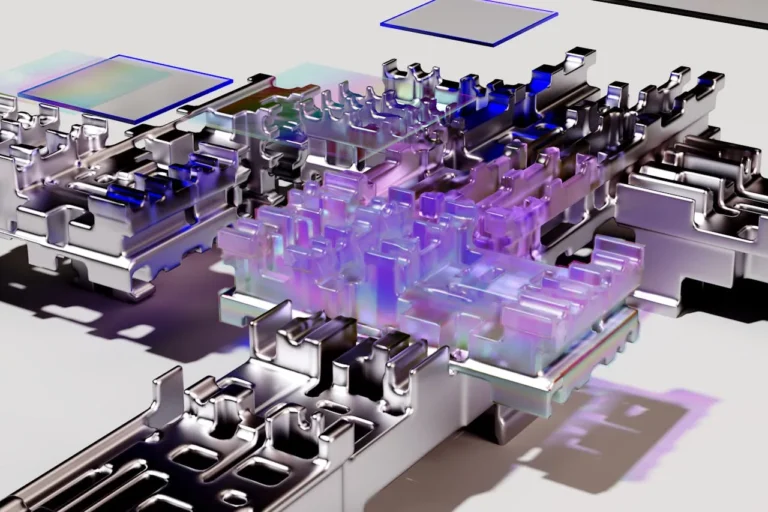

Redefining AI Inference with Atlas

At the heart of Positron’s innovation is Atlas, its first-generation product, which is already shipping to customers. Atlas uses a memory-optimized, FPGA-based architecture to deliver 3.5x better performance-per-dollar and up to 66% lower power consumption than NVIDIA’s H100. Unlike general-purpose GPUs, Atlas is designed exclusively to accelerate and serve generative AI applications, and it achieves 93% memory bandwidth utilization, compared with the 10–30% typical of GPU-based systems. As a result, Atlas can support models with up to half a trillion parameters in a single 2-kilowatt server.
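To illustrate why bandwidth utilization matters, here is a rough back-of-the-envelope sketch. It assumes a simplified model in which single-stream token generation is limited by how fast the model weights can be read from memory, so the decode rate is bounded by effective bandwidth divided by model size. The peak bandwidth and model size below are hypothetical round numbers chosen for illustration, not Positron or NVIDIA specifications, and the model ignores batching, KV-cache traffic, and compute limits.

```python
def decode_tokens_per_second(peak_bandwidth_gb_s: float,
                             utilization: float,
                             model_size_gb: float) -> float:
    """Rough upper bound on single-stream decode rate.

    Assumes every generated token requires reading all weights once,
    so throughput is effective bandwidth divided by model size.
    """
    effective_bandwidth = peak_bandwidth_gb_s * utilization
    return effective_bandwidth / model_size_gb


# Hypothetical values, for illustration only.
PEAK_GB_S = 1000.0   # assumed peak memory bandwidth in GB/s
MODEL_GB = 16.0      # assumed model size, e.g. 8B parameters at 2 bytes each

high_util = decode_tokens_per_second(PEAK_GB_S, 0.93, MODEL_GB)
low_util = decode_tokens_per_second(PEAK_GB_S, 0.30, MODEL_GB)

print(f"93% utilization: {high_util:.1f} tokens/s")
print(f"30% utilization: {low_util:.1f} tokens/s")
print(f"ratio: {high_util / low_util:.2f}x")
```

Under these assumptions, at equal peak bandwidth the gap in utilization translates directly into a proportional gap in achievable decode rate.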

Atlas is fully compatible with Hugging Face transformer models and serves inference requests through an OpenAI API-compatible endpoint. Powered by chips fabricated in the U.S., it is already deployed in production environments, where it supports large language model (LLM) hosting, generative agents, and enterprise copilots with significantly reduced latency and hardware overhead. For organizations that need to scale AI operations within fixed cost and power budgets, these capabilities make Atlas a practical alternative to GPU-based serving.
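In practice, an OpenAI API-compatible endpoint means existing client code can typically be pointed at a different base URL. The sketch below builds a standard chat-completions request using only the Python standard library. The base URL, model name, and API key are hypothetical placeholders, and the assumption that the endpoint exposes the usual `/v1/chat/completions` path is ours, not something the announcement confirms.

```python
import json
import urllib.request


def build_chat_request(base_url: str, model: str, prompt: str,
                       api_key: str) -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style chat completion request."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=base_url.rstrip("/") + "/v1/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )


def send(request: urllib.request.Request) -> dict:
    """Send the request and decode the JSON response body."""
    with urllib.request.urlopen(request) as response:
        return json.load(response)


# Hypothetical endpoint and model name, for illustration only.
request = build_chat_request(
    base_url="https://inference.example.com",
    model="example-org/example-llm",
    prompt="Summarize the benefits of memory-optimized inference.",
    api_key="YOUR_API_KEY",
)
print(request.full_url)
```

Calling `send(request)` against a real deployment would return the parsed JSON response; it is left uncalled here because the endpoint above is a placeholder.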

Addressing Critical Bottlenecks in AI Infrastructure

“Memory bandwidth and capacity are two of the key limiters for scaling AI inference workloads for next-generation models,” said Dylan Patel, founder and CEO of SemiAnalysis, a leading research firm specializing in semiconductors and AI infrastructure. Patel, who serves as an advisor and investor in Positron, highlighted the company’s unique approach to solving these challenges. “Positron is taking a novel approach to the memory scaling problem, and with its next-generation chip, can deliver more than an order of magnitude greater high-speed memory capacity per chip than incumbent or upstart silicon providers.”

The implications are clearest where power is the limiting resource. By generating 3x more tokens per watt than existing GPUs, Positron lowers the energy cost of each token and lets a data center serve roughly three times as many tokens, and earn the revenue tied to them, within the same power budget. As Randy Glein, co-founder and managing partner at DFJ Growth, explained, “Improving the cost and energy efficiency of AI inference is where the greatest market opportunity lies, and this is where Positron is focused. Their innovative approach to AI inference chip and memory architecture removes existing bottlenecks on performance and democratizes access to the world’s information and knowledge.”
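The tokens-per-watt figure can be related to the power reduction quoted for Atlas with a short calculation. The sketch below assumes the comparison is made at equal token throughput, which the announcement does not state explicitly; under that assumption, a given fractional cut in power implies a tokens-per-watt gain of 1 / (1 - reduction). The 1-megawatt budget in the second function is a hypothetical figure.

```python
def tokens_per_watt_gain(power_reduction: float) -> float:
    """Tokens-per-watt multiplier implied by a fractional power reduction,
    assuming token throughput is unchanged."""
    if not 0.0 <= power_reduction < 1.0:
        raise ValueError("power_reduction must be in [0, 1)")
    return 1.0 / (1.0 - power_reduction)


def tokens_in_budget(baseline_tokens_per_watt: float, gain: float,
                     power_budget_watts: float) -> float:
    """Token rate that fits in a fixed power budget after applying the gain."""
    return baseline_tokens_per_watt * gain * power_budget_watts


gain = tokens_per_watt_gain(0.66)  # 66% lower power at equal throughput
print(f"implied tokens-per-watt gain: {gain:.2f}x")

# Hypothetical 1 MW budget with a normalized baseline of 1 token/s per watt.
baseline = tokens_in_budget(1.0, 1.0, 1_000_000)
improved = tokens_in_budget(1.0, gain, 1_000_000)
print(f"relative token output in the same budget: {improved / baseline:.2f}x")
```

On this reading, the "up to 66% lower power" and "3x more tokens per watt" figures are roughly consistent with each other.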

A Capital-Efficient Path to Breakthrough Innovation

Founded in 2023 by CTO Thomas Sohmers and Chief Scientist Edward Kmett, Positron AI has achieved remarkable progress in just 18 months. With only $12.5 million in seed funding, the team brought Atlas to market, validated its performance, secured early enterprise customers, and hardened the product in real-world deployments—all before raising this Series A round. Mitesh Agrawal, former Lambda COO, joined as CEO to scale the company’s commercial operations and drive widespread adoption.

“Our mission at Positron is to meet the demands of modern AI: running frontier models at the lowest cost per token generation while offering the highest memory capacity of any chip available,” said Agrawal. “Our highly optimized silicon and memory architecture allows for superintelligence to be run in a single system, targeting up to 16-trillion-parameter models with tens of millions of tokens of context length or memory-intensive video generation models.”

Early Enterprise Adoption and Strategic Vision

Positron’s first publicly announced customers include Parasail (with SnapServe) and Cloudflare, alongside deployments within other major enterprises and leading neocloud providers. These early adopters highlight the versatility and value of Positron’s solutions in addressing real-world challenges.

With the Series A funding secured, Positron is advancing its next-generation system, Titan, powered by custom silicon codenamed ‘Asimov.’ Titan will feature up to two terabytes of directly attached high-speed memory per accelerator, enabling it to run up to 16-trillion-parameter models on a single system. This represents a massive leap in scalability and context limits for the world’s largest models. Additionally, Titan will support parallel hosting of multiple agents or models, eliminating the traditional 1:1 model-to-GPU constraint that hinders efficiency.
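The relationship between per-accelerator memory and model size can be checked with simple arithmetic. The announcement does not state how many accelerators a Titan system contains or which numeric precision the 16-trillion-parameter target assumes, so the sketch below treats bits per parameter as a free variable and counts weights only (no KV cache, activations, or runtime overhead). Terabytes are taken as decimal (10^12 bytes).

```python
TB = 10**12  # decimal terabyte, an assumption for this sketch


def weight_bytes(num_params: int, bits_per_param: int) -> int:
    """Memory needed to hold the model weights alone."""
    return num_params * bits_per_param // 8


def accelerators_needed(num_params: int, bits_per_param: int,
                        capacity_bytes: int = 2 * TB) -> int:
    """Minimum accelerators whose combined memory fits the weights."""
    needed = weight_bytes(num_params, bits_per_param)
    return -(-needed // capacity_bytes)  # ceiling division


PARAMS = 16 * 10**12  # 16 trillion parameters, as cited above

for bits in (4, 8, 16):
    size_tb = weight_bytes(PARAMS, bits) / TB
    count = accelerators_needed(PARAMS, bits)
    print(f"{bits}-bit weights: {size_tb:.0f} TB, at least {count} accelerators")
```

Under these assumptions, the number of 2-terabyte accelerators required scales linearly with the chosen weight precision.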

Titan’s over-provisioned, inference-tuned networking architecture is designed to sustain low-latency, high-throughput performance even under heavy concurrency. Built to integrate into existing infrastructure, Titan requires no exotic cooling and adheres to standard data center form factors.

A Defensible Niche in Low-Cost Inference

Gavin Baker, managing partner and chief investment officer of Atreides Management, praised Positron’s strategic focus. “We have passed on the overwhelming majority of AI accelerator startups we have diligenced over the last six years, as most were mounting frontal assaults on NVIDIA that were unlikely to succeed. Positron has carefully chosen a defensible niche in low-cost inference. More importantly, they have proven that their software works before developing an ASIC: Positron is running competitively priced production inference workloads today on 2022-era FPGAs in a server of their own design. This speaks to the quality of their software stack, their system-level expertise, and the judgment of their management team.”

Pioneering a New Era of American AI Infrastructure

Positron AI aims to set a new standard for American AI infrastructure. By addressing the critical bottlenecks of memory, power, and cost, the company is enabling enterprises to run frontier AI models that would otherwise be too expensive or too power-hungry to deploy. As demand for efficient, scalable inference continues to grow, Positron expects its technology to change how AI is deployed and scaled globally, with a continued focus on innovation and accessibility.

About Positron AI

Positron AI is transforming generative AI inference with energy-efficient, high-performance compute systems built entirely in the United States. Headquartered in Reno, Nevada, with a remote-first team distributed across the country, Positron’s unique hardware architecture offers the lowest total cost of ownership for transformer models by solving the power, memory, and scalability bottlenecks of legacy infrastructure.