New platform enables agencies to execute end-to-end creative projects across text, image, video, and audio

Luma today announced the launch of Luma Agents, a new class of AI collaborators capable of executing end-to-end creative work across text, image, video, and audio. Built on Luma’s new Unified Intelligence architecture, the platform is designed for agencies, marketing teams, studios, and enterprise organizations seeking to scale creative output without sacrificing quality. Luma Agents maintain full context from initial brief to final delivery, coordinating tools, models, and iterations within a single unified system. Global enterprise partners including Publicis Groupe and Serviceplan Group have already deployed Luma Agents across their operations.

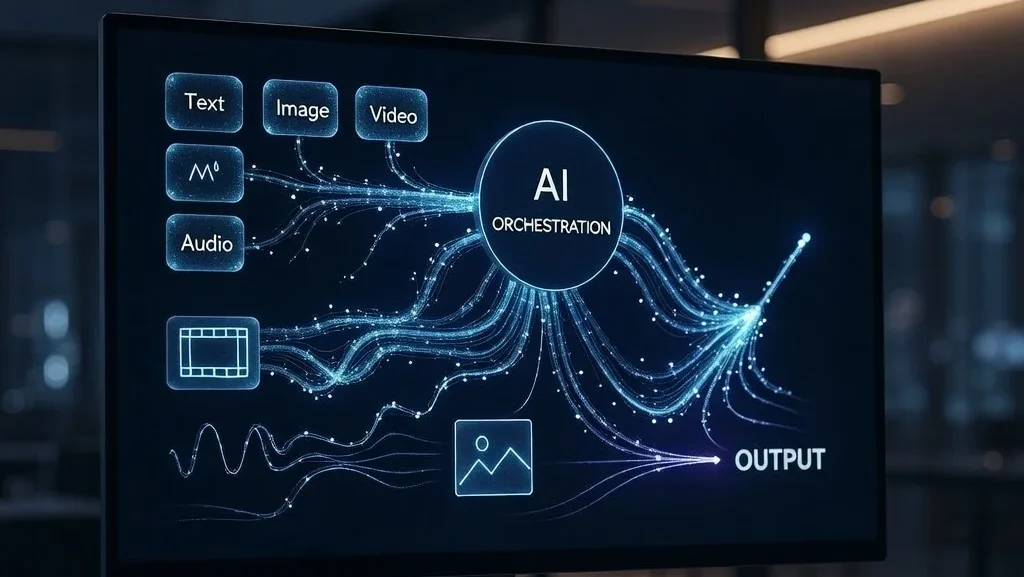

The launch addresses a fundamental limitation in current AI creative workflows. Most AI systems today are assembled by chaining together separate models for language, vision, video, and reasoning, stitching outputs together through orchestration layers. While powerful in isolation, these approaches fragment context and require increasingly complex workflows to produce reliable creative results. Luma’s Unified Intelligence architecture takes a different approach, training a single multimodal reasoning system capable of understanding and generating across formats within the same architecture.

Key Insights at a Glance

- Unified Intelligence Architecture: A single multimodal reasoning system capable of understanding and generating across text, image, video, and audio within the same model, eliminating context fragmentation.

- End-to-End Execution: Agents maintain shared context from planning through production and delivery, advancing multiple creative directions in parallel while evaluating and refining outputs iteratively.

- Global Enterprise Deployment: Publicis Groupe and Serviceplan Group are deploying Luma Agents across strategy, creative development, and production workflows, with Serviceplan Group’s teams spanning more than 20 countries.

- Multi-Model Coordination: Agents automatically select and route tasks to leading AI models including Ray3.14, Veo 3, Sora 2, Kling 2.6, and ElevenLabs while maintaining persistent context.

Uni-1: Reasoning and Imagination in a Single Architecture

The first model built on Luma’s Unified Intelligence architecture is Uni-1, a decoder-only autoregressive transformer operating over a shared token space that interleaves language and image tokens. This design enables both modalities to function as first-class inputs and outputs in the same sequence, allowing the model to reason in language while imagining and rendering in pixels within a single forward pass. Rather than separating thinking from creation, Uni-1 tightly couples reasoning and rendering, enabling the system to plan, imagine, and produce as part of one coherent cognitive process. “Intelligence shouldn’t be fragmented by modality,” said Amit Jain, Co-Founder and CEO of Luma. “Unified systems reason holistically. When the same model can think, imagine, and render, you move closer to intelligence that behaves coherently across the entire creative process.”
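To make the idea of a shared token space concrete, here is a minimal sketch in Python. It is not Luma's implementation: Uni-1's vocabulary layout has not been published, so the vocabulary sizes, the `BOI`/`EOI` marker tokens, and the `interleave` and `split` helpers are all assumptions chosen for illustration. The sketch only shows how text tokens and image tokens could share one id range and sit in a single sequence that a decoder-only model reads left to right.

```python
from typing import List, Optional, Tuple

# Hypothetical sizes: Uni-1's real vocabulary layout is not public.
TEXT_VOCAB_SIZE = 1000        # text token ids occupy 0..999
IMAGE_CODEBOOK_SIZE = 512     # image token ids occupy 1000..1511
BOI = TEXT_VOCAB_SIZE + IMAGE_CODEBOOK_SIZE  # begin-of-image marker (assumed)
EOI = BOI + 1                                # end-of-image marker (assumed)

Segment = Tuple[str, List[int]]  # ("text" or "image", modality-local ids)


def interleave(segments: List[Segment]) -> List[int]:
    """Flatten text and image segments into one sequence of shared-vocabulary ids."""
    sequence: List[int] = []
    for kind, ids in segments:
        if kind == "text":
            if any(not 0 <= i < TEXT_VOCAB_SIZE for i in ids):
                raise ValueError("text id out of range")
            sequence.extend(ids)
        elif kind == "image":
            if any(not 0 <= i < IMAGE_CODEBOOK_SIZE for i in ids):
                raise ValueError("image id out of range")
            sequence.append(BOI)
            # Offset image ids so they never collide with text ids.
            sequence.extend(TEXT_VOCAB_SIZE + i for i in ids)
            sequence.append(EOI)
        else:
            raise ValueError(f"unknown segment kind: {kind}")
    return sequence


def split(sequence: List[int]) -> List[Segment]:
    """Recover the segments from a well-formed shared-vocabulary sequence."""
    segments: List[Segment] = []
    text_run: List[int] = []
    image_run: Optional[List[int]] = None  # None while outside an image span
    for token in sequence:
        if token == BOI:
            if image_run is not None:
                raise ValueError("nested image span")
            if text_run:
                segments.append(("text", text_run))
                text_run = []
            image_run = []
        elif token == EOI:
            if image_run is None:
                raise ValueError("EOI without matching BOI")
            segments.append(("image", image_run))
            image_run = None
        elif image_run is not None:
            image_run.append(token - TEXT_VOCAB_SIZE)
        else:
            text_run.append(token)
    if image_run is not None:
        raise ValueError("unterminated image span")
    if text_run:
        segments.append(("text", text_run))
    return segments
```

In this toy layout, a prompt such as "text, image, text" becomes one flat list of integers, which is the property the release describes: both modalities appear as inputs and outputs in the same sequence.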

Creative Agents That Replace Fragmented Workflows

Luma Agents replace fragmented, multi-model workflows with coordinated execution built on unified reasoning. Instead of switching between disconnected tools and rebuilding context at every step, teams work alongside agents that execute projects end-to-end, maintain shared context across formats, advance multiple creative directions in parallel, and evaluate outputs rather than generating one-shot results. The agents operate inside a collaborative, multiplayer environment where humans direct creative intent while agents handle orchestration, routing, and execution. This architecture enables agents to coordinate across leading AI models including Ray3.14, Veo 3, Sora 2, Kling 2.6, Nano Banana Pro, Seedream, GPT Image 1.5, and ElevenLabs, automatically selecting and routing tasks to the best model or capability for each step while maintaining persistent context across assets and iterations.
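The routing behavior described above can be pictured with a short Python sketch. Everything here is hypothetical: `ProjectContext`, `Router`, and the stub backends are illustrative names, not part of any Luma API, and the selection rule (one backend per modality) is a deliberate simplification of whatever policy the agents actually use. The point is only that each step receives the same context object and appends to it, so later steps can see what earlier ones produced.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class ProjectContext:
    """Shared state carried from brief to delivery (hypothetical structure)."""
    brief: str
    history: List[str] = field(default_factory=list)


# A backend takes a task description plus the shared context and returns an output label.
Backend = Callable[[str, ProjectContext], str]


def make_stub_backend(name: str) -> Backend:
    """Build a stand-in for a real model call; it only reports what it was given."""
    def run(task: str, ctx: ProjectContext) -> str:
        return f"{name}:{task} (brief={ctx.brief!r}, prior_steps={len(ctx.history)})"
    return run


class Router:
    """Routes each task to the backend registered for its modality."""

    def __init__(self) -> None:
        self._backends: Dict[str, Backend] = {}

    def register(self, modality: str, backend: Backend) -> None:
        self._backends[modality] = backend

    def execute(self, modality: str, task: str, ctx: ProjectContext) -> str:
        if modality not in self._backends:
            raise KeyError(f"no backend registered for modality: {modality}")
        output = self._backends[modality](task, ctx)
        ctx.history.append(output)  # persist the result for later steps
        return output


router = Router()
router.register("video", make_stub_backend("video-model"))
router.register("audio", make_stub_backend("audio-model"))

ctx = ProjectContext(brief="30-second launch spot")
router.execute("video", "storyboard", ctx)
print(router.execute("audio", "voiceover", ctx))
```

A real system would replace the stubs with calls to actual models and would likely score several candidates per step; the sketch keeps a single lookup so the context handling stays visible.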

Enterprise Adoption Across Global Agency Networks

Luma Agents are already embedded across global agency operations. Publicis Groupe and Serviceplan Group are deploying the platform across strategy, creative development, and production workflows to increase throughput while maintaining brand consistency across markets.

“Luma is now part of our broader House of AI ecosystem and integrated directly into our creative workflows. It allows our teams across more than 20 countries to collaborate more smoothly and develop great work faster,” said Alexander Schill, Global CCO at Serviceplan Group. “For our clients, that means high-quality creative output delivered with greater speed and efficiency – without compromising craft.”

Luma Agents include enterprise safeguards such as full IP ownership retained by customers, automated content review to reduce copyright risk, legal trace documentation demonstrating human involvement, and required human review workflows prior to public release.

“Creative work has never lacked ambition; it’s lacked execution capacity,” added Jain. “Creative teams shouldn’t have to spend their time orchestrating tools. They should spend it creating. Agents aren’t shortcuts. They’re collaborators that maintain context, coordinate execution, and advance projects so teams can focus on taste, direction, and strategy.”

Future Outlook

Luma’s Unified Intelligence architecture represents a fundamental shift in how AI systems approach creative work. By training a single model capable of reasoning and generating across modalities rather than stitching together specialized components, the platform addresses the context fragmentation that has limited AI’s utility in complex creative workflows. The early deployment with major agency networks suggests that enterprise demand for end-to-end AI creative collaborators is accelerating. As Uni-1 and subsequent models evolve, the tight coupling of reasoning and rendering could enable increasingly sophisticated creative capabilities that more closely mirror human cognitive processes. The enterprise-grade safeguards built into Luma Agents also reflect growing client requirements around intellectual property protection and compliance in AI-generated content.

Conclusion

Luma Agents introduce a new category of AI collaborators capable of executing complete creative projects while maintaining context from brief to delivery. By building on its Unified Intelligence architecture, which integrates reasoning and generation within a single system, Luma enables agencies and enterprises to scale creative output without sacrificing quality or brand consistency. Global partners including Publicis Groupe and Serviceplan Group are already deploying the platform across their operations.

About Luma

Luma, based in Palo Alto, California, builds unified multimodal AI systems that combine reasoning and generation within a single model architecture. Its Unified Intelligence platform powers agents capable of planning and producing end-to-end creative work across video, imagery, and 3D, serving teams at leading advertising agencies, global enterprises, and entertainment studios. In 2025, Luma launched Ray3, the world’s first reasoning video model, followed by Ray3.14, delivering native 1080p outputs and production-grade stability for professional workflows. The company is backed by HUMAIN, Andreessen Horowitz, AWS, AMD Ventures, NVIDIA, Amplify Partners, Matrix Partners, and leading investors across technology and entertainment. For more information, visit www.lumalabs.ai.

Source link: https://www.businesswire.com/