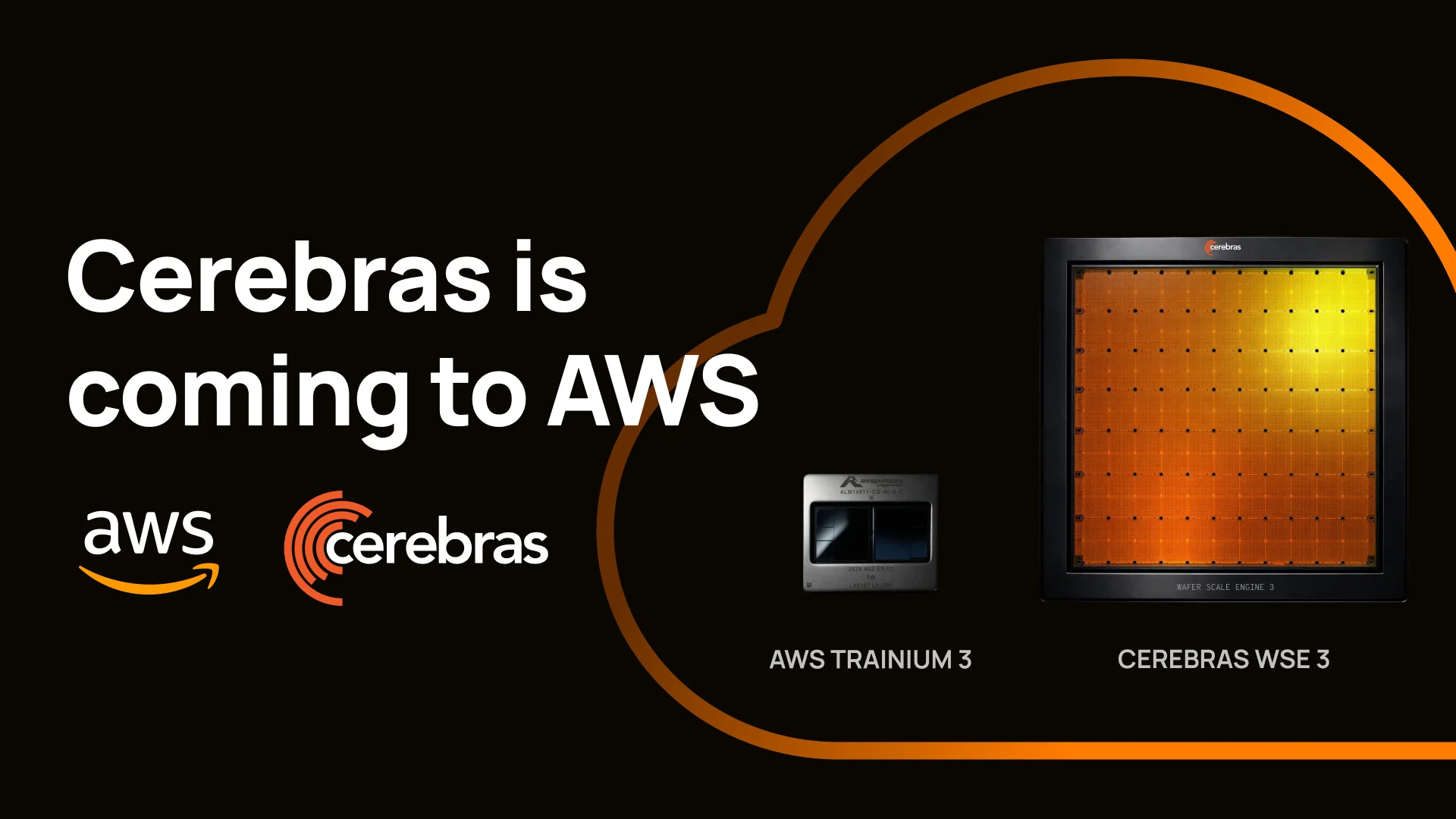

AWS and Cerebras Unveil the Fastest AI Inference Solution for Generative AI and LLM Workloads

How can businesses stay ahead in the race for AI innovation? Amazon Web Services (AWS) and Cerebras Systems are teaming up on what they describe as the fastest AI inference solution available, set to launch in the coming months. The collaboration aims to significantly increase the speed of generative AI applications and large language models (LLMs), addressing a critical bottleneck for real-time coding assistance and interactive applications.

Why Inference Speed Matters in AI

Inference, the step where a trained model actually produces answers, is where AI delivers its practical value, yet it remains a significant bottleneck for latency-sensitive workloads. Real-time coding assistants and interactive applications generate responses while a user waits, so the time taken to produce each token directly shapes the user experience. As David Brown, Vice President of Compute & ML Services at AWS, puts it, “Inference is where AI delivers real value to customers, but speed remains a critical bottleneck for demanding workloads like real-time coding assistance and interactive applications.”

The Disaggregated Inference Solution

Just as a relay race assigns each leg to the runner best suited to it, AWS and Cerebras have designed a solution that matches each stage of LLM inference to the hardware best suited to it. The Trainium + CS-3 system splits the inference workload into two distinct stages: prefill and decode. Prefill processes the entire input prompt at once, so it is compute-intensive and highly parallel; it is handled by AWS Trainium. Decode generates output tokens one at a time, each depending on the tokens before it, so it is serial and memory-bandwidth-intensive; it is handled by the Cerebras CS-3. According to the companies, this disaggregation lets each processor run at peak efficiency and delivers inference an order of magnitude faster than current solutions.
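To make the split concrete, here is a toy Python sketch of disaggregated inference. It is not AWS or Cerebras code: `KVCache`, `PrefillWorker`, `DecodeWorker`, and `disaggregated_generate` are hypothetical names, and the arithmetic inside them merely stands in for a real model's forward pass. The sketch assumes, as is typical of disaggregated serving designs, that the prefill stage hands its per-token state (the key/value cache) over to the decode stage.

```python
from __future__ import annotations

from dataclasses import dataclass, field

# Hypothetical stand-ins for illustration only; none of these are AWS or Cerebras APIs.


@dataclass
class KVCache:
    """Per-token state built by prefill and extended by decode (assumed handoff object)."""
    entries: list[int] = field(default_factory=list)


class PrefillWorker:
    """Compute-bound stage: handles every prompt token in one pass.
    In the announced design, this role is played by AWS Trainium."""

    def run(self, prompt_tokens: list[int]) -> KVCache:
        # A real model would run one batched (parallel) forward pass over all tokens;
        # here each token's cache entry is just a toy transform of the token id.
        return KVCache(entries=[t * 31 % 1000 for t in prompt_tokens])


class DecodeWorker:
    """Bandwidth-bound stage: emits one token per step.
    In the announced design, this role is played by the Cerebras CS-3."""

    def run(self, cache: KVCache, max_new_tokens: int) -> list[int]:
        output: list[int] = []
        for _ in range(max_new_tokens):
            # Each step re-reads the whole cache and depends on the previous step,
            # so the steps must run strictly in sequence.
            next_token = sum(cache.entries) % 1000
            cache.entries.append(next_token * 31 % 1000)
            output.append(next_token)
        return output


def disaggregated_generate(prompt_tokens: list[int], max_new_tokens: int,
                           prefill: PrefillWorker, decode: DecodeWorker) -> list[int]:
    cache = prefill.run(prompt_tokens)         # stage 1: parallel prefill
    return decode.run(cache, max_new_tokens)   # stage 2: serial decode on the handed-off cache


print(disaggregated_generate([1, 2, 3], max_new_tokens=3,
                             prefill=PrefillWorker(), decode=DecodeWorker()))
# prints [186, 952, 464]
```

The only thing the two stages share in this sketch is the cache object, which is what allows them to live on different hardware. In a real deployment that handoff would presumably cross a high-speed link between machines, and its cost is one of the trade-offs a disaggregated design has to manage.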

AWS and Cerebras: A Pioneering Cloud Collaboration

The collaboration pairs two purpose-built AI processors. AWS Trainium, Amazon’s purpose-built AI chip, is designed to deliver scalable performance and cost efficiency across a wide range of generative AI workloads. The Cerebras CS-3, which Cerebras describes as the world’s fastest AI inference system, provides exceptionally high memory bandwidth. The combined system will be deployed in AWS data centers and offered through Amazon Bedrock, so enterprises can use it within their existing AWS environments.

Future Outlook

AWS and Cerebras plan to extend the partnership further. Later this year, AWS will also offer leading open-source LLMs and Amazon Nova models running on Cerebras hardware. As Andrew Feldman, Founder and CEO of Cerebras Systems, notes, “Every enterprise around the world will be able to benefit from blisteringly fast inference within their existing AWS environment.”

Conclusion

This collaboration between AWS and Cerebras marks a significant leap forward in AI inference speed. For businesses, it promises more responsive, interactive AI applications that improve user experiences and drive innovation. How is your organization preparing to take advantage of this technology? Join the conversation in the comments below.

About Amazon Web Services

Amazon Web Services (AWS) is guided by customer obsession, pace of innovation, commitment to operational excellence, and long-term thinking. By democratizing technology for nearly two decades and making cloud computing and generative AI accessible to organizations of every size and industry, AWS has built one of the fastest-growing enterprise technology businesses in history. Millions of customers trust AWS to accelerate innovation, transform their businesses, and shape the future. With the most comprehensive AI capabilities and global infrastructure footprint, AWS empowers builders to turn big ideas into reality. Learn more at aws.amazon.com and follow @AWSNewsroom.

About Cerebras Systems

Cerebras Systems builds the fastest AI infrastructure in the world. We are a team of pioneering computer architects, computer scientists, AI researchers, and engineers of all types. We have come together to make AI blisteringly fast through innovation and invention because we believe that when AI is fast it will change the world. Our flagship technology, the Wafer Scale Engine 3 (WSE-3), is the world’s largest and fastest AI processor. The WSE is 56 times larger than the largest GPU, yet it uses a fraction of the power per unit of compute while delivering inference and training more than 20 times faster than the competition. Leading corporations, research institutes, and governments on four continents have chosen Cerebras to run their AI workloads. Cerebras solutions are available on-premises and in the cloud. For further information, visit cerebras.ai or follow us on LinkedIn, X and/or Threads.

Source link: https://www.businesswire.com/