Intel Corporation and Google Expand Strategic Partnership to Advance Next-Generation AI Infrastructure

In a significant move aimed at shaping the future of artificial intelligence and cloud computing, Intel Corporation and Google have announced an expanded multiyear collaboration to co-develop and optimize next-generation AI infrastructure. This partnership underscores the growing importance of balanced, heterogeneous computing architectures that integrate central processing units (CPUs) with specialized accelerators to meet the escalating demands of modern AI workloads.

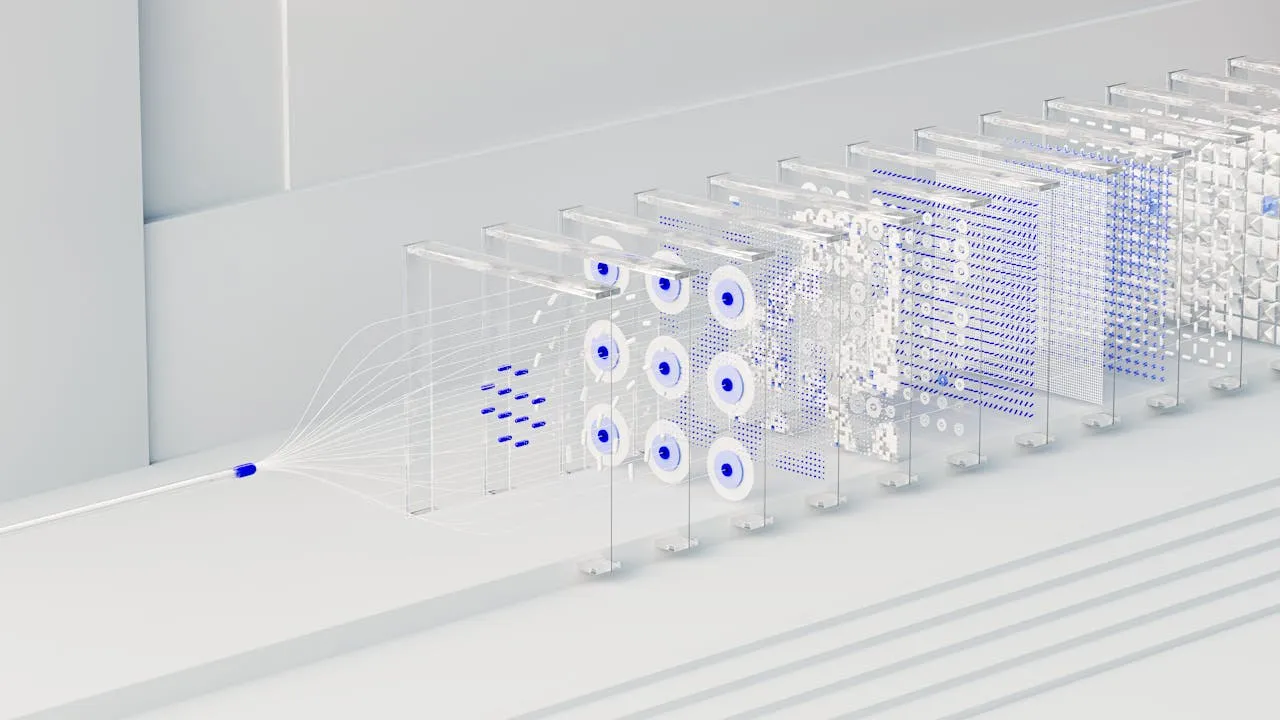

As artificial intelligence adoption accelerates across industries, the underlying infrastructure supporting these workloads is becoming increasingly complex. Traditional models that relied heavily on standalone accelerators, such as GPUs, are evolving into integrated systems where CPUs, accelerators, and networking components work in tandem. Intel and Google’s collaboration is centered on addressing this shift by enhancing system-level performance, efficiency, and scalability.

The Rising Importance of Heterogeneous AI Systems

Modern AI workloads—ranging from large-scale model training to real-time inference—require a diverse set of computational resources. While accelerators handle intensive parallel processing tasks, CPUs play a critical role in orchestrating workflows, managing data pipelines, and ensuring overall system coordination.

This has led to the emergence of heterogeneous computing environments, where multiple types of processors are combined to deliver optimal performance. In such architectures, CPUs act as the central control layer, coordinating interactions between accelerators, memory, and storage systems.
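The division of labor described above can be sketched as a small producer/consumer pipeline. This is a toy illustration only: `host_prepare`, `accelerator_consume`, and `run_pipeline` are hypothetical names, and the "accelerator" is an ordinary Python function standing in for a real device, not any Intel or Google API.

```python
import queue
import threading


def host_prepare(batches, work_queue):
    """CPU-side stage: prepare each batch and hand it to the accelerator stage."""
    for batch in batches:
        prepared = [x / 255.0 for x in batch]  # stand-in for decode/normalize work
        work_queue.put(prepared)
    work_queue.put(None)  # sentinel: no more work


def accelerator_consume(work_queue, results):
    """Stand-in for an accelerator (hypothetical): reduces each prepared batch."""
    while True:
        batch = work_queue.get()
        if batch is None:
            break
        results.append(sum(batch))


def run_pipeline(batches):
    # Bounded queue so the host cannot run arbitrarily far ahead of the consumer.
    work_queue = queue.Queue(maxsize=2)
    results = []
    consumer = threading.Thread(target=accelerator_consume, args=(work_queue, results))
    consumer.start()
    host_prepare(batches, work_queue)
    consumer.join()
    return results


results = run_pipeline([[0, 255], [255, 255, 255]])
print(results)
```

The point of the sketch is that the host stage owns sequencing and data preparation, while the consumer only does the heavy computation it is handed.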

Intel and Google recognize that scaling AI is not simply about adding more accelerators. Instead, it requires a holistic approach that balances compute resources across the entire system. By aligning their technologies across multiple generations of Intel’s processor roadmap, the two companies aim to deliver infrastructure that can efficiently handle the growing complexity of AI applications.

Strengthening the Role of Intel Xeon Processors

A key component of this collaboration is the continued deployment and optimization of Intel® Xeon® processors within Google’s global data center infrastructure. Google Cloud already relies extensively on Xeon-based systems to power its workload-optimized instances, including the latest Intel Xeon 6 processors.

These processors are designed to support a wide range of applications, from coordinating distributed AI training workloads to enabling low-latency inference and general-purpose cloud computing. By optimizing Xeon processors for Google’s specific requirements, the partnership aims to enhance performance while reducing energy consumption and operational costs.

The focus on total cost of ownership (TCO) is particularly important in hyperscale environments, where even small efficiency gains can translate into significant cost savings. Improvements in power efficiency, workload optimization, and system utilization are critical for maintaining sustainable and scalable cloud operations.
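To make the scale effect concrete, here is a back-of-the-envelope sketch in Python. Every figure in it (fleet size, per-server power draw, PUE, electricity price, and the 3% efficiency gain) is a hypothetical placeholder chosen for illustration, not a number reported by Intel or Google.

```python
HOURS_PER_YEAR = 24 * 365


def annual_energy_cost(servers, watts_per_server, pue, usd_per_kwh):
    """Yearly electricity cost in USD for a server fleet (all inputs hypothetical)."""
    fleet_kw = servers * watts_per_server / 1000  # IT load in kilowatts
    facility_kwh = fleet_kw * pue * HOURS_PER_YEAR  # include cooling/overhead via PUE
    return facility_kwh * usd_per_kwh


# Assumed values, for illustration only.
SERVERS = 100_000
WATTS = 500
PUE = 1.1
PRICE = 0.08  # USD per kWh

baseline = annual_energy_cost(SERVERS, WATTS, PUE, PRICE)
improved = annual_energy_cost(SERVERS, WATTS * 0.97, PUE, PRICE)  # 3% less power per server
savings = baseline - improved

print(f"baseline: ${baseline:,.0f}  savings: ${savings:,.0f}")
```

With these made-up inputs, a 3% reduction in per-server power works out to roughly $1.16 million a year in electricity alone, which is the sense in which small gains compound at hyperscale.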

Expanding Co-Development of Infrastructure Processing Units

In addition to CPUs, the collaboration places a strong emphasis on the development of custom infrastructure processing units (IPUs). These application-specific integrated circuits (ASICs) are designed to offload infrastructure-related tasks from host CPUs, thereby improving overall system efficiency.

IPUs handle functions such as networking, storage management, and security—tasks that traditionally consume valuable CPU resources. By delegating these responsibilities to dedicated hardware, cloud providers can free up CPU capacity for core computational workloads.

This approach not only improves resource utilization but also enhances performance predictability. In large-scale AI environments, consistent performance is essential for maintaining reliability and meeting service-level agreements.

The co-development of IPUs between Intel and Google represents a strategic effort to create tightly integrated systems where CPUs and accelerators work seamlessly together. This integration is key to building scalable infrastructure capable of supporting next-generation AI applications.

Enabling Scalable and Efficient Data Center Architectures

The combination of Xeon CPUs and IPUs forms the foundation of a modern data center architecture that prioritizes efficiency, flexibility, and scalability. By balancing general-purpose computing with specialized acceleration, this approach addresses the limitations of traditional infrastructure models.

One of the primary advantages of this architecture is its ability to scale without significantly increasing complexity. As AI workloads grow in size and sophistication, maintaining manageable system complexity becomes a critical challenge. Integrated solutions that streamline operations and reduce overhead are essential for sustaining long-term growth.

Furthermore, the use of IPUs allows for more efficient resource allocation. By offloading infrastructure tasks, organizations can achieve higher effective compute capacity from the same host CPUs rather than adding more servers. This not only reduces costs but also minimizes energy consumption, contributing to more sustainable data center operations.
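The capacity argument can be illustrated with simple arithmetic. In the sketch below, the core count and the share of CPU time spent on infrastructure tasks (before and after offload) are assumed values picked for illustration; the article does not give measured figures.

```python
def usable_cores(total_cores, infra_fraction):
    """Cores left for application work after infrastructure overhead.

    infra_fraction is the share of CPU time spent on networking, storage,
    and security tasks (a hypothetical input, not a measured value).
    """
    return total_cores * (1 - infra_fraction)


TOTAL_CORES = 64  # assumed host size

before = usable_cores(TOTAL_CORES, 0.25)  # assumed: 25% overhead without offload
after = usable_cores(TOTAL_CORES, 0.05)   # assumed: 5% overhead with an IPU
gain = after / before - 1

print(f"before: {before:.1f} cores, after: {after:.1f} cores, gain: {gain:.1%}")
```

Under these illustrative assumptions, the same 64-core host goes from 48 to about 61 usable cores, a gain of roughly 27% with no change to the host CPU itself.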

Driving Performance and Efficiency at Hyperscale

The collaboration between Intel and Google is particularly focused on addressing the needs of hyperscale environments, where infrastructure must support millions of users and vast amounts of data.

Lip-Bu Tan, CEO of Intel, emphasized that the future of AI infrastructure lies in balanced system design. According to Tan, achieving optimal performance requires more than just powerful accelerators; it demands a coordinated approach that integrates CPUs, accelerators, and networking components into a cohesive system.

Similarly, Amin Vahdat, Senior Vice President and Chief Technologist for AI Infrastructure at Google, highlighted the enduring importance of CPUs and infrastructure acceleration in AI systems. From orchestrating training workloads to enabling efficient inference and deployment, these components remain central to the overall performance and reliability of AI applications.

The long-standing partnership between Intel and Google, which spans nearly two decades, provides a strong foundation for this collaboration. By leveraging Intel’s processor roadmap and Google’s expertise in large-scale infrastructure, the two companies are well-positioned to address the evolving demands of the AI landscape.

Supporting the Next Wave of AI Innovation

As AI continues to evolve, new applications and use cases are emerging across industries. From natural language processing and computer vision to autonomous systems and scientific research, the demand for advanced computing capabilities is growing rapidly.

The expanded collaboration between Intel and Google is aimed at supporting this next wave of innovation by providing the infrastructure needed to power these applications. By focusing on open, scalable solutions, the partnership seeks to enable a broader ecosystem of developers, enterprises, and researchers.

Open infrastructure is particularly important for fostering innovation, as it allows organizations to build and deploy applications without being constrained by proprietary systems. By promoting interoperability and flexibility, Intel and Google are helping to create an environment where new ideas can thrive.

Reducing Complexity and Enhancing Flexibility

One of the key challenges in modern AI infrastructure is managing complexity. As systems become more sophisticated, the number of components and interactions increases, making it more difficult to maintain efficiency and reliability.

The integrated approach adopted by Intel and Google addresses this challenge by simplifying system design. By combining general-purpose CPUs with purpose-built accelerators, the partnership enables a more streamlined architecture that is easier to manage and scale.

This flexibility is crucial for adapting to changing workloads and technological advances. As new AI models and applications emerge, infrastructure must be able to evolve quickly to meet new requirements. The solutions developed through this collaboration are designed to provide the adaptability needed for future growth.

Sustainability is another important consideration in the development of AI infrastructure. Data centers consume significant amounts of energy, and improving efficiency is essential for reducing environmental impact.

By focusing on energy-efficient processors and optimized system architectures, Intel and Google are working to minimize the carbon footprint of their infrastructure. The use of IPUs to offload tasks and improve resource utilization is a key part of this strategy.

These efforts align with broader industry goals to create more sustainable technology solutions while continuing to drive innovation.

The expanded partnership between Intel Corporation and Google represents a significant step forward in the evolution of AI infrastructure. By emphasizing the importance of balanced, heterogeneous systems, the collaboration addresses the growing complexity of modern AI workloads.

Through the integration of Intel Xeon processors and custom infrastructure processing units, the two companies are building a foundation for scalable, efficient, and flexible AI systems. This approach not only enhances performance and reduces costs but also supports the broader ecosystem of developers and enterprises driving innovation.

As the demand for AI continues to grow, partnerships like this will play a crucial role in shaping the future of technology. By combining their expertise and resources, Intel and Google are helping to create the infrastructure needed to power the next generation of AI-driven applications and services.

Source link: https://newsroom.intel.com